Leon Poltawski, PhD¹, Meriel Norris, PhD² and Sarah Dean, PhD¹

From the ¹Institute for Health Research, University of Exeter Medical School, Exeter and ²Centre for Research in Rehabilitation, School of Health Sciences and Social Care, Brunel University, London, UK

BACKGROUND: Intervention fidelity is concerned with the extent to which interventions are implemented as intended. Consideration of fidelity is essential if the conclusions of effectiveness studies are to be credible, but little attention has been given to it in the rehabilitation literature. We describe our experiences addressing fidelity in the development of a rehabilitation clinical trial, and consider how an existing model of fidelity may be employed in rehabilitation research.

METHODS: We used a model and methods drawn from the psychology literature to investigate how fidelity might be maximised during the planning and development of a stroke rehabilitation trial. We considered fidelity in intervention design, provider training, and the behaviour of providers and participants. We also evaluated methods of assessing fidelity during a trial.

RESULTS: We identified strategies to help address fidelity in our trial protocol, along with their potential strengths and limitations. We incorporated these strategies into a model of fidelity that is appropriate to the concepts and language of rehabilitation.

CONCLUSION: A range of strategies are appropriate to help maximise and measure fidelity in rehabilitation research. Based on our experiences, we propose a model of fidelity and provide recommendations to inform the growing literature of fidelity in this discipline.

Key words: rehabilitation; methods; research design; clinical trials; intervention fidelity; process evaluation.

J Rehabil Med 2014; 46: 609–615

Guarantor’s address: Leon Poltawski, University of Exeter Medical School, St Luke’s Campus, Magdalen Rd, Exeter EX1 2LU, UK. E-mail: L.Poltawski@exeter.ac.uk

Accepted Apr 28, 2014; Epub ahead of print Jun 17, 2014

Background

Intervention fidelity is an important consideration in the credibility of research findings (1, 2), but until recently has received relatively little attention in physical rehabilitation research (3, 4). Fidelity is the extent to which an intervention’s core components are implemented as intended (5). This includes not only the content of the intervention but also how it is delivered, for instance its intensity, how long it lasts, and who delivers it. Fidelity is particularly important in rehabilitation interventions because they are often complex, involving multiple components and contextual factors that may produce a variety of outcomes by summative or synergistic action (6). In clinical trials, if interventions are not implemented as intended, drawing conclusions about effectiveness can be problematic. For example, a small effect size observed in a trial might be a sign that the therapy under investigation is ineffective; but it could also be because it was not applied with sufficient intensity or skill, or because a client did not engage sufficiently with it. These factors might vary between study participants, or between centres in a multi-centre trial. If such threats to fidelity are not controlled, we cannot be sure if outcomes are due to the intended intervention or to variations in the way it is implemented. Variations in fidelity may account for conflicting findings between apparently similar studies. There is strong empirical evidence that levels of fidelity affect the outcomes of interventions (7), and so it is essential to consider fidelity issues in rehabilitation trial design.

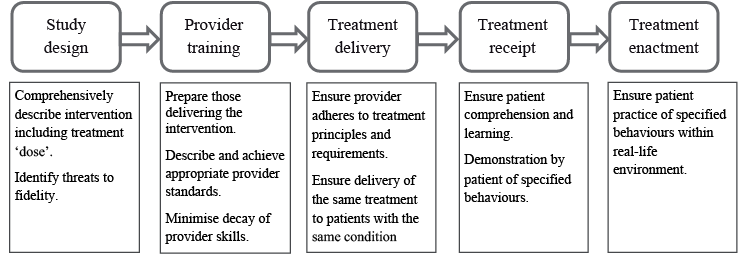

Guidelines regarding the development, evaluation and reporting of complex interventions recommend that fidelity should be addressed in all phases of the research process, from early development to translation into practice (8, 9). There have also been specific calls to address fidelity in rehabilitation research (1, 10, 11), but as yet there is little evidence of this happening (3, 4). Models of fidelity, and strategies to assess and enhance it, have been developed and used particularly in psychology intervention research (2, 12–14). The US National Institutes of Health (NIH) Behaviour Change Consortium has proposed a model of fidelity which identifies 5 areas in which fidelity should be addressed in behaviour change research: study design, the training of intervention providers, and treatment delivery, receipt and enactment (12) (Fig. 1).

Although this model of fidelity was developed for use in studies of psychologically-focused interventions, it may also have applicability in physical rehabilitation research. Rehabilitation often involves behaviour change, and contends with similar challenges of defining and quantifying treatment, and of accounting for factors such as practitioner skills and client engagement (6). In 2003, Henessey & Rumrill (1) highlighted the importance of fidelity in rehabilitation research. They identified threats to fidelity and proposed strategies for its enhancement, including standardising the intervention, using the same practitioner to deliver it, and monitoring contextual influences on outcomes. Since then, several rehabilitation studies have specifically addressed intervention fidelity (4, 15–17). They particularly focus on fidelity assessment by identifying the essential components of interventions, and expressing them in terms of the actions and behaviours of therapists and patients, which are used to generate observational checklists to rate fidelity. In one study concerned with low back pain (15), intervention components were classified as essential, compatible or forbidden. This facilitated the assessment of “differentiation fidelity”, which refers to the maintenance of important differences in treatment between two groups compared in a trial (12). In studies concerned with rehabilitation after stroke (4) and other disabling medical events (16), the impact of provider training, monitoring and feedback on intervention fidelity was investigated.

These studies have begun to delineate an approach to fidelity in rehabilitation research. However, they are particularly concerned with the assessment of fidelity in provider training, and in treatment delivery and receipt; they give less consideration to the ways fidelity may be addressed during the design phase of studies. Furthermore, the methods they developed were specific to particular health conditions or interventions, and may have limited transferability to other applications. Hence, there is considerable scope for further development of approaches to fidelity in rehabilitation research. The NIH model provides a suitable framework for this development, but may require adaptation to the concepts, contexts and language of rehabilitation, and more studies are required to inform this process.

The purpose of this paper is to illustrate how the principles of fidelity may be applied in the practice of rehabilitation research. We do this by describing our use of the NIH model to address fidelity in the development of a rehabilitation intervention trial. In the process we adapted the NIH model and this paper also proposes a new model of fidelity that may be more appropriate for use in rehabilitation.

Overview of trial development work

In accordance with guidance for the development and evaluation of complex interventions (9) we undertook a number of preliminary studies to inform the development of a trial protocol. These were concerned with an exercise-based rehabilitation intervention for long-term stroke survivors, called Action for Rehabilitation from Neurological Injury (ARNI) (18). ARNI is a personalised physical training programme delivered by qualified Exercise Professionals, which is currently provided in several centres in the UK, either in group or one-to-one format. There is substantial anecdotal evidence of its physical and psychological benefits but a clinical trial is needed to generate definitive evidence. To inform development of a trial protocol, we conducted a suite of investigations:

A. Two mixed-methods before-and-after studies of ARNI training, delivered to long-term stroke survivors over 3 months: in one study, one-to-one training was provided twice-weekly (19); in another, weekly group-based training was offered (20, 21).

B. A survey of ARNI group-based programmes in the UK, involving documentary analysis, field visits and interviews with programme commissioners, providers, trainers and users.

C. Analysis of ARNI manual (18) and other documentation, discussion with developer, and observation of provider-training.

D. Synthesis of recommendations from practice guidelines relevant to community exercise-based programmes after stroke (22).

E. Stakeholder consultation: including focus groups with stroke survivors, discussions with clinicians, and convening a Public and Patient Involvement group.

F. Considering other studies on related issues including behaviour change techniques, promoting exercise self-management, and fidelity assessment.

These studies provided data and experience to develop the trial protocol and to identify strategies to enhance intervention fidelity, to mitigate the effects of possible threats to fidelity, and to measure the different facets of fidelity during the trial (Table I).

In subsequent sections, we describe how we addressed fidelity in the different phases of trial planning and conduct identified in the NIH model.

Table I. Data sources used to address fidelity in trial development

| NIH fidelity phase | Contribution of preliminary studies to fidelity planning (data sources used) |
|---|---|
| Study design | Describing key ARNI elements and principles (A, B, C); Ensuring intervention meets current best practice guidelines (C, D); Developing intervention manual (A, B, C, D, F); Identify appropriate assessment methods and outcome measures (A, E); Identify process measures that might influence fidelity (A, B) |
| Provider training | Identifying key elements of provider training regarding ARNI (B, C); Identifying key elements of provider briefing regarding conduct of trial (A, C); Developing trainer materials, quality standards and minimum experience levels (A, B, C, D) |
| Treatment delivery | Distinguish core and flexible components of intervention (A, B, C); Identifying necessary resources to deliver intervention (A, B); Developing fidelity assessment instruments (A, F); Identifying threats to fidelity and possible strategies to mitigate (A, B, F) |
| Treatment receipt | Developing study participant information materials (A, C, E); Identifying strategies to promote participant engagement (A, E); Developing fidelity assessment instruments (A, B, F) |
| Treatment enactment | Developing participant information materials (A, E); Identify factors influencing adherence and ongoing engagement in intervention (A, B, E); Developing fidelity assessment instruments (A, B, F) |

Study design

Trial design involves the development of a comprehensive description of the intervention, and identifying potential threats to fidelity. The intervention description should specify not only its core content, but also the way it is delivered and the contextual factors that may influence its outcomes (10). Ideally, interventions should be based on sound theoretical principles (9). However, while behavioural science has produced many theories on which to base the content of interventions, rehabilitation is not so well-served (23, 24). Moreover, the moderating influences of delivery issues and contextual factors (such as where treatments take place or the prior experience of providers) may have little articulated theoretical basis. Hence, other rationales for interventions are required. We were guided by the principles of Intervention Mapping, another approach developed within the behaviour change literature, which suggests that rationales for interventions may arise from a range of sources (22). These include randomised controlled trials or systematic reviews concerning related interventions, practice guidelines, and consultation with stakeholders.

We used several sources to identify core components of ARNI that would be essential in the trial intervention, and those that might be included but were not essential. The practice guidelines were particularly useful in providing recommendations in areas that were not addressed in detail in ARNI documentation, such as how group programmes should be organised. The survey and consultations with stroke survivors and ARNI trainers provided insights regarding the aspects of ARNI programmes that might mediate or moderate its effectiveness, such as the approach and attitude of trainers and the provision of both group and one-to-one training. These factors could then be addressed both in the intervention description and during fidelity assessment. Drawing on multiple data sources helped provide a comprehensive definition of the intervention, but was also challenging in several respects. The continuous development of ARNI by its originator, and its adaptation to different contexts by programme providers, meant that identifying its core content was not straightforward. Also, synthesising data and prioritising findings from different sources is complex. However, the Intervention Mapping approach suggests strategies for some elements of this process, and it has been used successfully in planning other complex intervention trials (25, 26). In our ongoing work, these principles are being used to map the trial intervention in detail.

Provider training

The NIH model recommends that those providing the intervention should be prepared through standardised training, with a focus on skills acquisition and performance to defined quality criteria (12). For rehabilitation research, it has been suggested that preparation should focus on promoting particular provider behaviours, which can be assessed and corrected both during training and delivery of the intervention (10, 16). In our studies, ARNI training was provided by qualified and experienced Exercise Professionals, who had been prepared by participation in the ARNI Institute’s standard training and accreditation. This involved a 5-day knowledge and skills-based training course, supervised and unsupervised skills practice with stroke survivors, knowledge and skills assessment, and a case study report. They were also given written and oral briefings on the nature of our research, the format of the intervention (training session frequency, duration and number), and additional reporting requirements, including written reports on each training session and qualitative interviews before and after the intervention. The principles and content of the intervention were described in the provider training course, but the providers were expected to tailor the intervention to the needs of participants. This is an important consideration in fidelity, since it means that the content of the intervention is not identical for all participants. Rather, fidelity was operationalised as adherence to a set of key principles. This concept is addressed more fully below.

The involvement of stroke survivors as models during provider training enabled immediate assessment and feedback to the providers on their adherence to key ARNI principles. The trainers reported that working with people with stroke encouraged their belief in the value of ARNI and commitment to its principles. The trainers also saw the course as aiding their employability, partly because it was university-accredited. These are important considerations in enhancing fidelity, since “buying into” the intervention may affect the provider’s adherence to its principles (27).

Interviews with trainers and observation of training sessions suggested that more preparatory experience would be necessary before a trial, as there was a tendency to fall back on familiar activities and behaviours, some of which were not in accord with the ARNI approach. It was evident that the training itself required greater focus on the core behaviours required of the practitioners and clients, and the proscription of activities or behaviours (such as the provision of nutritional advice) that might detract or divert resources from core intervention components. More preparation of trainers was also required regarding their role in the research process, particularly collecting process and outcomes data, which was not done consistently.

In addition to provider training, we found that other elements of intervention preparation could also impact on fidelity. For example, in the study of one-to-one training, items of equipment such as adjustable platforms for progressing functional tasks and large wall-mirrors for visual feedback on client performance were not available in every location. Comments by trainers and clients helped to identify key resources such as these, and to consider the implications for subsequent location and delivery of the intervention in a trial. The availability of dependable transport was also found to affect fidelity, because it impacted upon session attendance levels in both the one-to-one and group-based programmes. Resource availability may moderate the effectiveness of interventions, and so essential resources must be specified, and their presence and use recorded, as part of fidelity assessment. This is particularly important if the location of the intervention varies for different participants, for instance by using several venues, as might occur in a multicentre trial.

Delivery, receipt and enactment

In the NIH model of fidelity in behaviour change interventions, implementation involves delivery, receipt and enactment of skills (12). We found these terms problematic in the rehabilitation context. “Delivery” and “receipt” imply that interventions are packages provided by practitioners and passively received by patients or clients. In rehabilitation, there is a dynamic interplay between practitioner and client, in which the behaviour of one affects and is affected by the other, resulting in co-creation of the intervention. This is particularly the case when programmes are explicitly based upon negotiated goals, but it also occurs in pre-specified interventions when practitioners employ and change strategies according to client circumstances and preferences, and where the nature of the intervention may evolve according to experience and discussion. “Enactment” means applying what has been learned in the intervention to real life situations. In many behaviour-change interventions, enactment is regarded as a desired outcome (13), but in rehabilitation it is typically a core part of the intervention itself, as restoration of function requires the client to practise exercises and skills in their daily life.

To address these issues, we conceptualised practitioners and clients as collaborators in implementing or “acting out” an intervention. Fidelity is then defined in terms of the essential activities and behaviours of practitioner and client, and of their interactional behaviour. These become the criteria by which fidelity is assessed. Several approaches can be used for the assessment of trainer and client behaviours, each with associated advantages and limitations (13, 27, 28): self-report (by clients and trainers) is resource-efficient and can provide multiple perspectives, but has potential for bias and inaccuracy; in-vivo observation enables contextual and environmental factors to be assessed but can produce reactivity-effects due to observation; video and audio recording of sessions enables repeated objective assessment by multiple observers, but may be resource-intensive. We explored their value and feasibility through:

• Trainer records of sessions (including goals, content, duration and dates of attendance)

• Client exercise diaries (including homework and personal reflections)

• Post-programme qualitative interviews with clients and trainers

• In vivo observation of training sessions by researchers

• Analysis of video recordings of training sessions by researchers.

We gave particular attention to the analysis of video recorded sessions, since this method can enhance the objectivity and reproducibility of fidelity assessment by using independent evaluators (28). We developed an assessment process involving: (i) completion of a Timed Observation Form when viewing the recording, noting the nature and duration of activities and the behaviour of trainer and client; (ii) using this information to complete a Summary Observation Form, in which the evaluator rated the session for fidelity to the core intervention components and essential ARNI principles. Selection of these components and principles was based on an analysis of ARNI documentation and the survey of existing ARNI programmes (referred to in the earlier section on intervention design).

The fidelity rating system was designed to take account of both the quantity and quality of implementation. Some reports of fidelity in rehabilitation trials indicate only the presence or absence of intervention components (4, 15), but effectiveness may also depend on the “dose”. In rehabilitation, dose is a multi-dimensional construct, encompassing factors such as the number of repetitions of an activity, its duration and intensity level – all of which may impact upon the therapeutic effect of the activity (29). Therefore all these components were addressed in the observation forms. We also aimed to address quality: in some studies, implementation quality has been assessed primarily in terms of how skilfully a practitioner employs a particular strategy, but we extended this principle to the behaviour of the client. Quality was operationalised in terms of the client’s performance of core activities and their engagement in interactive behaviours, such as negotiating session content and problem-solving. The fidelity assessment criteria combined such quality standards with quantitative dose information to produce a single rating score for each component of the intervention. The score represented the strength of evidence that the training session was faithful to the defined components and principles. The scoring system and accompanying manual (available from the corresponding author) were developed iteratively. They were evaluated by 4 individuals familiar with the ARNI approach, who used them to assess fidelity in a video-recorded training session.
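
As an illustration only, the combination of dose and quality into a single rating per component might be sketched as follows. The actual scoring rules are set out in the manual available from the corresponding author and are not reproduced here; the component names other than functional task practice, the dose thresholds, the 0–2 quality scale and the 0–3 rating scale are all hypothetical assumptions made for this sketch.

```python
from dataclasses import dataclass


@dataclass
class ComponentObservation:
    """One intervention component as noted by an evaluator (hypothetical structure)."""
    name: str
    repetitions: int   # observed number of repetitions
    minutes: float     # observed duration in minutes
    quality: int       # evaluator's quality rating: 0 (poor), 1 (partial), 2 (good)


# Hypothetical minimum doses; no thresholds are given in the paper.
TARGETS = {
    "functional task practice": {"repetitions": 10, "minutes": 5.0},
    "balance exercise": {"repetitions": 8, "minutes": 10.0},
}


def rate_component(obs: ComponentObservation) -> int:
    """Return a 0-3 rating: 0 = component absent, 3 = target dose met with good quality."""
    if obs.repetitions == 0 and obs.minutes == 0:
        return 0
    target = TARGETS[obs.name]
    dose_met = (obs.repetitions >= target["repetitions"]
                and obs.minutes >= target["minutes"])
    if dose_met and obs.quality == 2:
        return 3
    if dose_met or obs.quality == 2:
        return 2
    return 1  # component present, but neither dose nor quality standard met
```

A design of this kind keeps presence, dose and quality distinguishable in the raw observation while still yielding one score per component, which is the property described above.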

Fidelity assessment by video analysis raised several issues requiring further attention. First, the grading process involved a degree of subjectivity for some important variables, for instance when judging the intensity of an exercise based mostly on body language. This might be addressed by ensuring the trainer asks the client regularly to grade their effort using, for example, the Borg scale of perceived exertion (30), or by physiological monitoring. However, the concept of intensity had no obvious applicability for some components, such as functional task practice, and in these cases dose may only refer to the number of repetitions. Second, the relative importance of intervention components and training principles was unknown and no weighting was used in the fidelity assessment. Incorporation of fidelity data into trial analyses might help elucidate the influence of individual components on outcome, and so inform future iterations of the grading scheme. We found good levels of agreement between raters on fidelity scores for most of the key components and principles, but we recommend more extensive evaluation (e.g. 14, 27) of the scheme’s validity and reliability before it is used.
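
The statistic used to judge agreement between raters is not specified above. As a minimal sketch, and assuming ratings on a categorical scale, agreement between two raters could be summarised with percent agreement and Cohen's kappa, which corrects for agreement expected by chance. With more than two raters, as in our evaluation, a multi-rater statistic (for example Fleiss' kappa or an intraclass correlation) would be a more natural choice; the two-rater case is shown only because it is the simplest to follow.

```python
from collections import Counter


def percent_agreement(rater_a, rater_b):
    """Proportion of items on which the two raters gave the same score."""
    matches = sum(x == y for x, y in zip(rater_a, rater_b))
    return matches / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters scoring the same items on a categorical scale."""
    n = len(rater_a)
    p_observed = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    categories = set(rater_a) | set(rater_b)
    # Chance agreement: product of each rater's marginal proportions, summed over categories.
    p_expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both raters used one identical category throughout
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical fidelity scores (0-3) from two raters for eight components.
scores_a = [3, 3, 2, 1, 0, 2, 3, 1]
scores_b = [3, 2, 2, 1, 0, 2, 3, 0]
print(percent_agreement(scores_a, scores_b), cohens_kappa(scores_a, scores_b))
```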

Some fidelity data could be verified by cross-checking several sources. For example, trainer records and session observations showed good agreement on session content, although some important data regarding trainer behaviour and trainer-client interactions were only recorded by researchers. Home-based practice of exercises and strategies (“enactment” in the NIH model) is an essential component of ARNI, and this was also addressed in the fidelity assessment. Although trainers were expected to set and enquire about homework, trainer records and session observation suggested this did not happen consistently. Client exercise diaries were generally poorly completed and provided little usable fidelity data. Qualitative interviews enabled cross-checking of written records, provided some missing data, and suggested reasons for the poor completion of client diaries. These included misunderstanding of how they should be completed, forgetting to complete them, and their perceived burden. As a result of these findings, we are considering alternative fidelity-checking approaches such as simpler self-report forms, online versions, e-reminders to complete reports, and the use of accelerometry to objectively record patterns and amounts of physical exercise and activity.

A proposed approach to fidelity in rehabilitation research

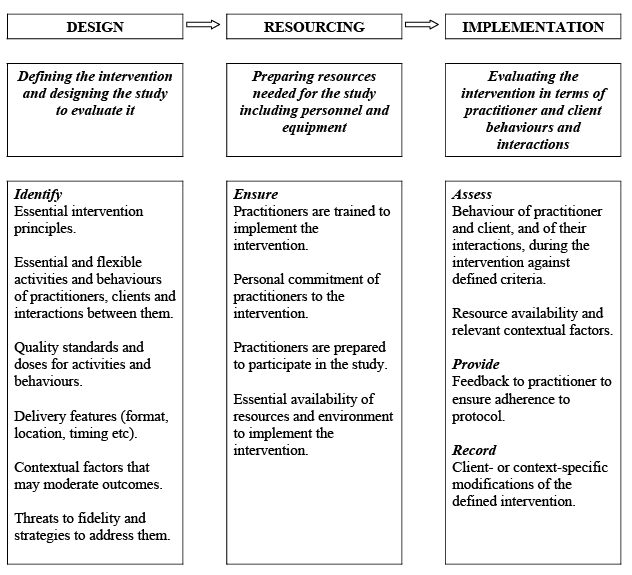

The NIH model of fidelity proved useful in identifying the phases in trial development where fidelity can be addressed. The examples we have provided illustrate how this can work in practice, some of the challenges involved, and strategies that can help enhance and measure fidelity. We found it necessary to adapt elements of the NIH model to more appropriately reflect the concepts and contexts of rehabilitation. As a result, through discussion and practical application of the concepts in the model, we developed a model that may be of value more broadly in rehabilitation research (Fig. 2). It conceptualises fidelity in 3 phases of research: intervention and study design, resourcing and implementation. These map onto the 5 phases of the NIH model, but augment and reconfigure them to suit their application to rehabilitation research.

As in the NIH model, “Design” involves development of the intervention itself and of the study used to investigate it. However, in our model a broader range of sources is suggested to inform this process. These include theoretical models and justifications for components of the intervention, along with empirical evidence from trials, practice guidelines, surveys of existing practice and stakeholder consultations. These are used to provide rationales for all components of the intervention; to inform writing of the intervention manual; and to provide specific contextual information that more general theoretical models cannot include. They also help identify threats to fidelity and strategies to mitigate them.

An important concern regarding the definition of interventions is that they encourage a standardised one-size-fits-all approach that is incompatible with effective clinical practice (14). Indeed we faced this dilemma in our development work, as ARNI is a personalised programme based on individual characteristics and preferences. One way of addressing this issue is to develop an algorithm defining the intervention components and doses that may be used with each client, depending on their characteristics. Fidelity is then defined as the extent to which the algorithmically-derived intervention is implemented. Another approach is to focus on the extent to which the principles of the intervention are adhered to, rather than its content and delivery (31). These variables are still measured as evidence of adherence to principles, but they may legitimately vary according to individual circumstances without threatening fidelity. We adopted this approach in assessing fidelity to the ARNI intervention. Both approaches allow for personalisation of the intervention while retaining the concept of defined standards against which fidelity is assessed.
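
The algorithmic approach can be sketched in code. The rules, client characteristics and component names below are hypothetical and chosen only to show the shape of the idea; they are not drawn from the ARNI manual or from our trial protocol.

```python
def planned_components(client: dict) -> set:
    """Hypothetical rules mapping client characteristics to the components planned for them."""
    plan = {"functional task practice"}      # assumed to be core for every client
    if client.get("can_stand_unaided"):
        plan.add("standing balance work")
    if client.get("upper_limb_movement"):
        plan.add("upper limb strengthening")
    return plan


def algorithmic_fidelity(client: dict, delivered: set) -> float:
    """Proportion of the algorithmically derived plan that was actually implemented."""
    plan = planned_components(client)
    return len(plan & delivered) / len(plan)


client = {"can_stand_unaided": True, "upper_limb_movement": False}
delivered = {"functional task practice", "nutritional advice"}
print(algorithmic_fidelity(client, delivered))  # half of the planned components were delivered
```

Under this scheme, two clients may receive different content yet both be rated as fully faithful, because each is compared with their own derived plan rather than with a single fixed protocol. Activities outside the plan, such as the nutritional advice in the example, do not raise the score and could be recorded separately as proscribed content.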

In our model, “Resourcing” includes provider training, as in the NIH approach, but also recognises that physical resources and particular environments may be required for the intervention and should be considered in fidelity planning and assessment. Finally, “Implementation” replaces the NIH concepts of treatment delivery, receipt and enactment, and focuses instead on the individual and interactional behaviours of practitioner and client that are necessary for intervention effectiveness. Implementation encompasses enactment, the transfer of activities by the client to their real-world context, because this is a core component of most rehabilitation interventions. However, in some situations enactment may also be regarded as an outcome. For instance, in an ARNI-based intervention, ongoing and more regular engagement in unsupervised physical exercise is a desired outcome.

By attending to the elements of fidelity identified in our model during study development, a rigorous and comprehensive treatment of fidelity can be built into the research protocol. If, as in our case, the development work includes feasibility studies of the intervention, these may be used as test-beds for fidelity assessment in the Implementation phase, and their findings can feed back to the Design and Resourcing phases. Hence development becomes an iterative process resulting in more rigorous and effective ways of both enhancing and assessing fidelity in the subsequent trial. A toolkit presenting practical strategies to assess and enhance fidelity in each phase, and identifying fidelity-related threats and outputs, is available as an additional file (http://www.medicaljournals.se/jrm/content/?doi=10.2340/16501977-1848).

The model was developed in the context of our particular study, but it is framed in generic terms to facilitate its application to other areas of rehabilitation research. It represents an initial iteration, and its fitness for purpose requires further evaluation. The model’s strength is its comprehensiveness, but individual components would benefit from more detailed elucidation and adaptation to the context of rehabilitation research. For example, the construction of detailed and replicable intervention descriptions, which are essential for fidelity, would benefit from the availability of a controlled terminology or taxonomy of rehabilitation. Such taxonomies are beginning to be developed (32, 33), and their potential value in fidelity enhancement and assessment needs to be explored. Further work is also needed on methods of incorporating fidelity data into study analyses to ensure that conclusions are robust, appropriate and transparent. Although the model needs further development and evaluation, we suggest that it may be a useful resource to facilitate consideration of fidelity in rehabilitation research.

Conclusions

This paper illustrates some of the issues and challenges of addressing fidelity at all stages in the planning and conduct of rehabilitation effectiveness studies. Our experience demonstrated the utility, as well as the limitations, of the NIH model within the rehabilitation context. We have proposed a model for fidelity in rehabilitation research. It requires further development and testing, but we hope it will act as a catalyst for more consideration of this important methodological issue, and so improve the rigour and credibility of rehabilitation interventional research.

Acknowledgements

With thanks to the following for invaluable assistance in aspects of the work reported in this paper: Tom Balchin at the ARNI Institute and Sian Brooks, ARNI trainer; Cherry Kilbride and Amir Mohagheghi at Brunel University; and Vicki Goodwin at the University of Exeter. Thanks also to the reviewers for helpful suggestions to improve this paper.

This paper presents independent research, partially-funded by the National Institute for Health Research (NIHR) Collaboration for Leadership in Applied Health Research and Care (CLAHRC) for the South West Peninsula. The views expressed in this publication are those of the authors and not necessarily those of the NHS, the NIHR or the Department of Health in England. Publication of this paper was assisted by the financial support of Brunel University London.

References